Databricks SQL Documentation

Nov 11, 2021 · First install the Databricks Python SDK and configure authentication per the docs here: `pip install databricks-sdk`. Then you can use the approach below to print out secret …

Jun 21, 2024 · The decision to use a managed table or an external table depends on your use case and also on the existing setup of your Delta Lake, framework code and workflows. Your …
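The approach referred to in the Nov 11, 2021 snippet is cut off, so the following is only a minimal sketch of one way to read a secret with the Databricks Python SDK, not the original answer's code. It assumes authentication is already configured and that the Secrets API returns the value base64-encoded. The function names `decode_secret_value` and `fetch_secret` are hypothetical.

```python
import base64


def decode_secret_value(encoded_value: str) -> str:
    """Decode a base64-encoded secret value into plain text."""
    return base64.b64decode(encoded_value).decode("utf-8")


def fetch_secret(scope: str, key: str) -> str:
    """Fetch one secret via the Databricks Python SDK and return it decoded."""
    # Imported lazily so decode_secret_value works without the SDK installed.
    from databricks.sdk import WorkspaceClient

    # Assumption: credentials come from environment variables or a
    # profile in ~/.databrickscfg, as the snippet's "configure
    # authentication" step implies.
    client = WorkspaceClient()
    response = client.secrets.get_secret(scope=scope, key=key)
    return decode_secret_value(response.value)
```

Calling `print(fetch_secret("my-scope", "my-key"))` with a real scope and key (both names here are placeholders) would print the secret in clear text, so this is best kept to debugging sessions.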

What Are Schemas In Databricks Databricks Documentation

Sep 29, 2024 · EDIT: I got a message from a Databricks employee that currently (DBR 15.4 LTS) the parameter marker syntax is not supported in this scenario. It might work in the future …

Nov 9, 2023 · To create new clusters, a user needs to have either: permission to use a cluster policy. This is the recommended way of giving users the ability to create new clusters, DLT, Jobs …

Nov 6, 2024 · Answering your two sub-questions individually below: Does this mean that Databricks is storing tables in the default Storage Account created during the creation of …

Best Practices To Manage Databricks Clusters At Scale To Lower Costs Sync

Write Queries And Explore Data In The SQL Editor Databricks Documentation

Databricks Managed Tables Vs External Tables Stack Overflow

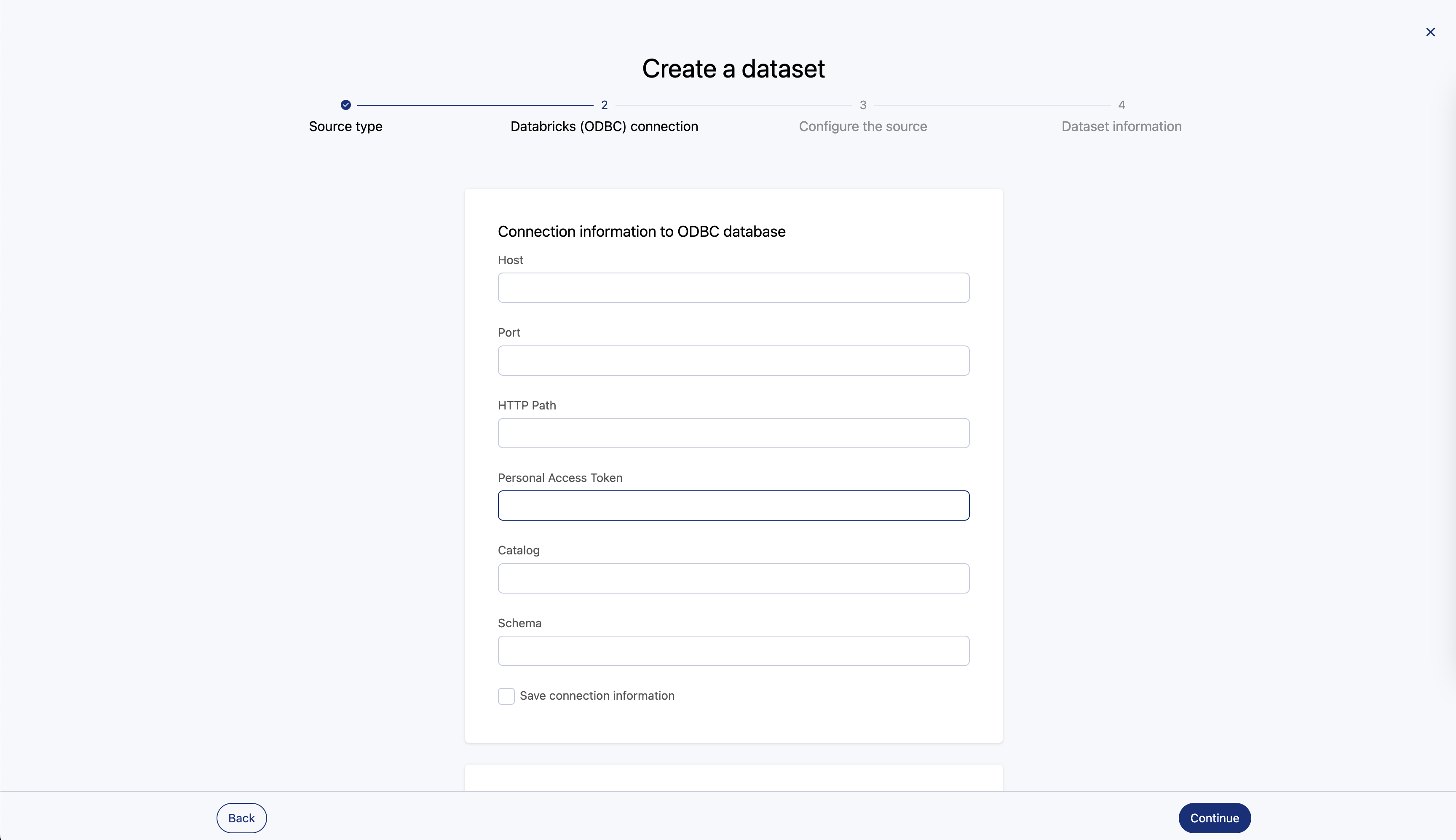

Databricks Connector

Databricks is smart and all, but how do you identify the path of your current notebook? The guide on the website does not help. It suggests (in Scala) dbutils.notebook.getContext.notebookPath

Unity Catalog Data Savvy

Jun 9, 2025 · I am trying to get the job id and run id of a Databricks job dynamically and keep it in the table with the code below: run_id = self.spark.conf.get("spark.databricks.job.runId", "no_ru …

User-defined Functions (UDFs) In Unity Catalog Databricks Documentation

Get Started Guides Databricks Community
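The code in the Jun 9, 2025 snippet is truncated, so the lookup-with-fallback pattern it starts to show can only be sketched under assumptions. The helper below takes any `get(key, default)` callable, such as `spark.conf.get` on a live session, and reads the `spark.databricks.job.runId` key quoted in the snippet. The helper name `run_id_from_conf` and the `"unknown"` default are placeholders, since the snippet's own default string is cut off, and the source does not confirm that this key is populated on every runtime.

```python
from typing import Callable

# Key quoted in the snippet above.
RUN_ID_KEY = "spark.databricks.job.runId"


def run_id_from_conf(conf_get: Callable[[str, str], str],
                     default: str = "unknown") -> str:
    """Return the job run id from a config getter, or the default if unset.

    conf_get is any callable with a (key, default) signature, for example
    spark.conf.get on a SparkSession, or dict.get for local testing.
    """
    return conf_get(RUN_ID_KEY, default)


# Local usage with a plain dict standing in for the Spark configuration.
fake_conf = {RUN_ID_KEY: "12345"}
current_run_id = run_id_from_conf(fake_conf.get)
```

Passing the getter in, rather than a Spark session, keeps the helper testable outside a cluster. On Databricks the call would be `run_id_from_conf(spark.conf.get)`.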

How To Use Databricks SQL for Analytics on Your Lakehouse iteblog pdf

Databricks Login

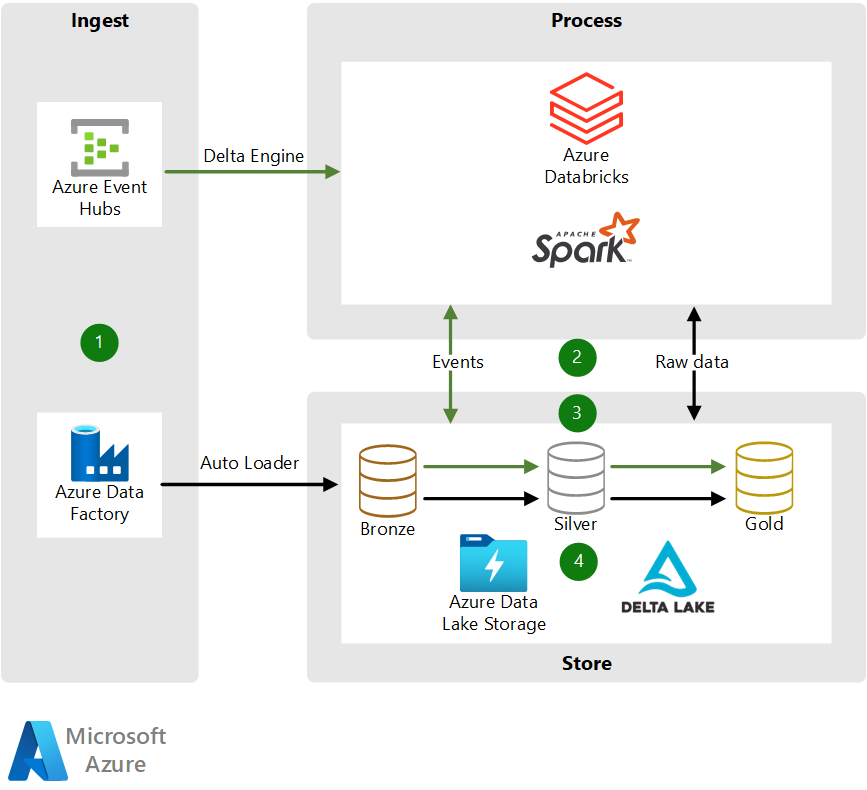

Pipe Data

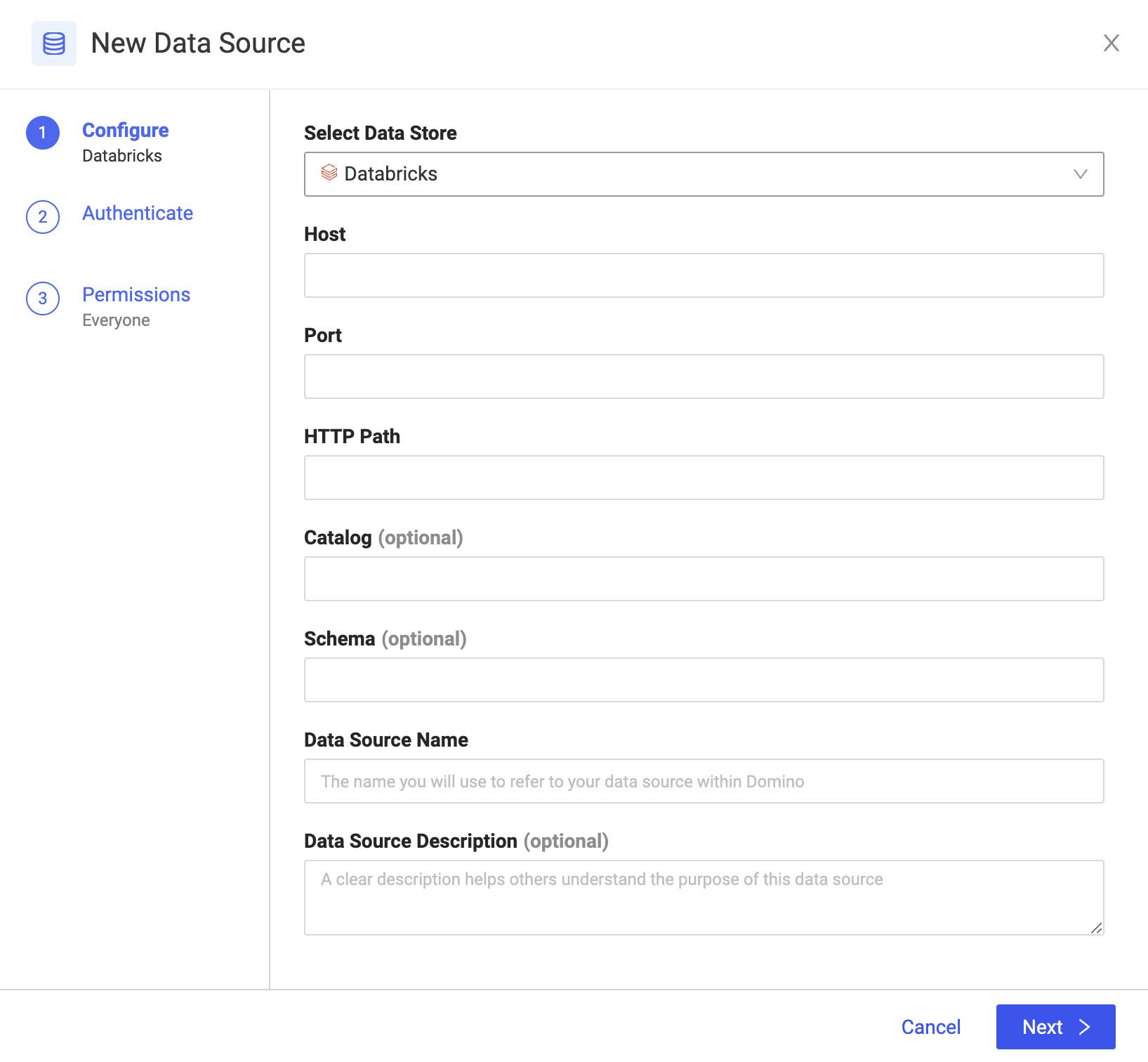

Modal With Input Parameters For The Databricks Data Source

Integrating Gradient Into Apache Airflow Sync

Trek Bicycle Uses Databricks And Qlik To Unify Point of sales Data